If you’ve defined joins for any length of time, you’re likely familiar with left, inner and full joins, and perhaps even right joins (opposite of a left join). But these aren’t the only kinds of joins out there. There are several others which, while their names may not be as familiar, still have practical applications for real-life business scenarios. Let’s explore several of these, including how to do them in Power Query!

Continue readingTag Archives: Power Query M

Less GUIDs: Relative Current Workspace & Items By Name

Power Query dataflows like to use GUIDs to reference workspaces, lakehouses and warehouses. With a GUID reference, you are authoritatively referencing a specific resource, which is good…but working with GUIDs can entail complications, both for humans and CI/CD, which is not so good.

I know what lakehouse “SomeLakehouse” is, but I’ll have to look up what lakehouse “fb7322d6-781e-4e0a-97b1-1eec69a5547b” is. On the source control side, I find that, due to how GUIDs tend to be intermixed with other code in Power Query, Git often incorrectly wants to merge dev workspace GUIDs to test, or test-specific GUIDs to prod. If not caught by careful human PR/merge review, such inadvertent merges leads to bad things happening.

Can we use names instead of GUIDs? That would resolve at least part of the challenge. Our code could refer to “SomeLakehouse” instead of a GUID (nice!).

However, switching from GUIDs to names still leaves the need to update workspace references when a dataflow is promoted across workspaces (e.g. from dev -> test -> prod.) Is there a way to reference the current workspace, regardless of its name?

The answer is yes to both! Using names instead of GUIDs isn’t new, but hasn’t often been done. On the other hand, relative workspace references is a brand-new, in preview feature.

Let’s explore both, in code!

Continue readingPower Query: Choice Values for Power Apps/Dataverse Picklist and Boolean Columns

How do you query out the possible label values of the choice columns (a.k.a. picklists or option sets) in a Dataverse/Power Apps table (entity) using Power Query?

As part of building a dimension table, here’s what I came up with to answer this question. In addition to working with picklist columns, with a slight tweak, the below can be used to fetch the textual label values for true/false column choices (e.g. for a given column, maybe true is configured to show as “Yes”, and false as “No”).

Sharing this so it can hopefully help the next person with the same need…and so that I can remember how to get this information if I need it again down the road. 🙂

Continue readingTable Interceptors

Have a table, but want to change—to override or augment—some of its behaviors? Not a technique normally needed in day-to-day Power Query, but perhaps useful in certain special scenarios.

Imagine that you are pulling a table containing many product rows from a data source. All works well, except for one performance challenge: Counting rows (e.g. Table.RowCount) is slow because the data connector doesn’t query fold the row count. Instead, Power Query is fetching all the rows in the table in order to count them locally, and this takes a while.

You know that there’s an API endpoint, /product/count, that can be called which will immediately return the count of products. If only you could use the existing data connector, which you are otherwise happy with, and just override how row counting is handled for this table so that it uses /product/count….

Column Types Don’t Matter, or Do They?

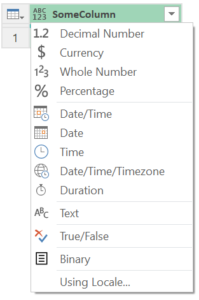

Power Query’s column types can be confusing. You can create or ascribe a column with any type—regardless of the kind of values in the column—and Power Query won’t complain, nor will the values in the column be transformed to the specified type. On the other hand, use Power Query’s “change type” functionality and the chosen column type does have an impact, causing values to be converted, as appropriate, and raising validation errors when that can’t be done.

Why does Power Query only sometimes care about column types? How do we make sense out of the seemingly contradictory behavior where types for columns matter when specified in one place (change type) but not in others (creation, ascription)?

Continue readingType Equality

Type equality is an advanced—and also confusing—Power Query topic. Sometimes, equality comparisons between type values seem to work as intuitively expected; other times, they may not. What’s going on? Is it okay—is it safe—to use the equals operator between type values?

But first: Why the confusion around this subject? The M language specification lays out a brief set of rules for type equality which define the minimum expected behavior. These may then be augmented by additional rules specific to the Power Query implementation you are using. Grasping the full implications of the former isn’t always intuitively obvious and there is almost nothing publicly written documenting the latter—resulting in Power Query type equality being a confusing, little understood advanced niche.

Let’s try to clear things up. Let’s explore the behaviors of Microsoft’s flagship Power Query mashup engine implementation (i.e. the Power Query that ships with Microsoft Power BI and Excel). We’ll examine both behaviors mandated by the M spec (specifically, the relevant subsections of “Types” and “Operators“) and additional ones that are specific to the flagship PQ implementation.

Let’s get started!

Continue readingRounding: Power Query vs. Others

Did you know that, by default, Power Query may round numbers differently than you learned in grade school? Unlike DAX, Microsoft Excel and Microsoft SQL Server, which use commercial rounding as their main or default approach to rounding, Power Query is different: it defaults to banker’s rounding.

What’s the difference between these two ways to round? What if you don’t want Power Query’s default—can you override it? What if you don’t ever intentionally round numbers, does this topic even matter to you? (Hint: Possibly yes!)

Let’s explore some details and options.

Continue readingWhat’s With WriteToDataDestination?

If you examine the refresh details for a Power Query gen2 dataflow, you’ll notice that the activity names which are displayed are identical to the query names in your dataflow except that each has a “_WriteToDataDestination” suffix appended to it. What’s going on with this?

Let’s see what we can uncover! Time for some sleuthing….

Continue readingExploring Power Query Buffering: How Table.Buffer and List.Buffer Work

Table.Buffer and List.Buffer buffer data—but how do they work? How deeply do they buffer? How do they handle errors? And, for that matter, when do they populate?

In a nutshell: Table.Buffer creates a stable copy* of a table’s rows. These rows are fetched only once from the source, regardless of how many times they are read from the buffer. Each time the buffer is accessed, the same* rows are returned in the same order. With List.Buffer, the behavior is identical, except it is list items that are buffered instead of table rows. (*But this may not mean what you think, so keep reading.)

The details have some nuances to them. Let’s explore them, as well as what happens when errors are encountered.

Continue readingTime Values from Dataflow to Power BI via DirectLake

Seems simple enough: The table, produced by your Power BI gen2 dataflow, contains a time column. You want to output this table to OneLake, then have Power BI read from it using a DirectLake-powered semantic model.

Sounds easy, but not so fast! When you try to set up the dataflow’s output mappings, columns of type time are not supported for OneLake destinations. Ouch!

So, what do you do?

Try leveraging the fact that Power BI’s time values are, behind the scenes, actually datetime values. For proper “type time” behavior, Power BI expects that the date part of each datetime value is set to Power BI’s epoch date (the date it considers day 0, which is 1899-12-30) and it also needs to know that the column is expected to have time behaviors.

Continue reading